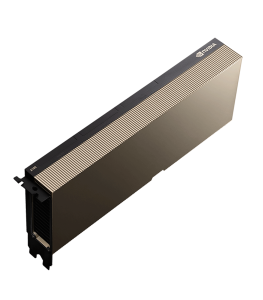

NVIDIA Ampere A100 Tensor Core GPU 80 GB PCIe 4.0 Graphic Card – Dual Slot

$140.50

Product information "NVIDIA Ampere A100 Tensor Core GPU 80 GB PCIe 4.0 Graphic Card – Dual Slot"

Features

The NVIDIA Ampere A100 Tensor Core GPU 80 GB PCIe 4.0 Graphics Card is aimed directly at the most demanding workloads in artificial intelligence, machine learning, deep learning, scientific simulation, and big data analytics. Built on the Ampere architecture, the A100 is engineered as a universal accelerator that delivers high throughput and efficiency across a diverse range of compute-intensive applications. Unlike traditional GPUs that focus primarily on rendering, the A100 is a data center-class card with no display outputs, where parallel processing and tensor computation are paramount. It provides the performance backbone for AI research labs, enterprise machine learning pipelines, and HPC (High-Performance Computing) environments, where every second of compute time matters.

Ampere Architecture: More Than Just a Core Upgrade

At the heart of the A100 lies NVIDIA’s Ampere architecture, which brings a suite of architectural enhancements over its predecessor, Volta. These include third-generation Tensor Cores and Multi-Instance GPU (MIG), a capability new to this generation that enables finer segmentation of GPU resources for better workload consolidation. The A100 supports Tensor Float 32 (TF32), bfloat16, FP16, FP64, and INT8/INT4 precision formats, making it flexible across a spectrum of use cases. Its Tensor Cores are designed to accelerate deep learning training and inference well beyond what standard FP32 operations achieve. Whether training large natural language processing models like GPT or performing real-time inference for vision systems, the A100 combines high performance with strong power efficiency. By improving performance per watt over Volta, Ampere enables both higher throughput and lower TCO (Total Cost of Ownership).
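To make the TF32 format mentioned above more concrete, here is a minimal Python sketch. TF32 keeps the 8-bit exponent of FP32 but only 10 mantissa bits, so the sketch clears the low 13 of FP32's 23 mantissa bits. The function name `to_tf32` is hypothetical, and plain truncation is a simplifying assumption; it illustrates the precision loss, not how the Tensor Cores actually round.

```python
import struct


def to_tf32(x: float) -> float:
    """Approximate TF32 precision by truncating an FP32 value to a 10-bit mantissa.

    Assumption: simple truncation, used only to illustrate the reduced precision.
    """
    # Reinterpret the value as its 32-bit IEEE 754 single-precision bit pattern.
    bits = struct.unpack("<I", struct.pack("<f", x))[0]
    # FP32 has 23 mantissa bits; keep the top 10 by clearing the low 13.
    bits &= 0xFFFFE000
    return struct.unpack("<f", struct.pack("<I", bits))[0]


print(to_tf32(1.0))         # 1.0 is exactly representable
print(to_tf32(3.14159265))  # loses digits beyond roughly three decimal places
```

The exponent bits are untouched, which is why TF32 covers the same numeric range as FP32 while trading away mantissa precision.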

Massive 80GB HBM2e Memory: Built for Big Data

One of the standout features of the A100 80GB PCIe version is its 80GB of HBM2e (High Bandwidth Memory), with a memory bandwidth of roughly 1.9 terabytes per second (1,935 GB/s). This large memory pool accelerates very large models, enormous datasets, and complex simulations that were previously bottlenecked by memory constraints. Applications such as genome sequencing, seismic analysis, financial risk modeling, and transformer-based NLP models (like BERT and GPT-style models) benefit significantly from this scale of high-bandwidth memory. Not only does the A100 handle larger batch sizes during training, but it also reduces the need for memory offloading, improving compute efficiency and reducing job runtime in GPU clusters.
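As a rough way to judge whether a model fits in 80GB, the arithmetic can be sketched in Python. The helper names and the example parameter counts are hypothetical. The 16 bytes per parameter figure is a common rule of thumb for mixed-precision training with the Adam optimizer (FP16 weights and gradients plus FP32 master weights and two optimizer states), and it ignores activations, so treat the results as a lower bound.

```python
def model_memory_gb(num_params: int, bytes_per_param: int) -> float:
    """Memory needed for model state, in GB (10**9 bytes)."""
    return num_params * bytes_per_param / 1e9


def fits_in_memory(num_params: int, bytes_per_param: int, capacity_gb: float = 80.0) -> bool:
    """True if the model state alone fits; usable capacity is somewhat below 80 GB in practice."""
    return model_memory_gb(num_params, bytes_per_param) <= capacity_gb


FP16_INFERENCE = 2        # bytes per parameter for FP16 weights only
MIXED_ADAM_TRAINING = 16  # assumed rule of thumb, excludes activations

params = 7_000_000_000  # hypothetical 7-billion-parameter model
print(model_memory_gb(params, FP16_INFERENCE), fits_in_memory(params, FP16_INFERENCE))
print(model_memory_gb(params, MIXED_ADAM_TRAINING), fits_in_memory(params, MIXED_ADAM_TRAINING))
```

Under these assumptions the same model that serves comfortably from one card needs several cards, or memory offloading, to train.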

PCIe 4.0 Interface for Scalable Integration

Unlike the SXM form factor, which is often reserved for proprietary data center builds, the PCIe 4.0 interface of the A100 makes it highly accessible for mainstream server installations and workstation integration. PCIe Gen 4.0 doubles the per-lane bandwidth of PCIe Gen 3.0, enabling faster data transfer between the CPU and GPU. This helps the A100 make full use of its compute capabilities in systems that require high I/O throughput, such as multi-GPU servers or high-end desktop workstations for data science. It works with AMD and Intel platforms alike and occupies a standard dual-slot PCIe position, making it a good fit for custom AI rigs, academic HPC clusters, and enterprise server rooms. With scalable deployment in mind, system administrators can install multiple A100s per server to build GPU clusters without the need for proprietary NVLink bridges.
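The doubling from PCIe Gen 3.0 to Gen 4.0 can be checked with a short Python calculation. This sketch assumes an x16 link and counts only the 128b/130b line encoding used by PCIe 3.0 and later; packet and protocol overhead are not modeled, so real transfer rates are lower. The function name is hypothetical.

```python
def pcie_bandwidth_gb_per_s(transfer_rate_gt: float, lanes: int = 16) -> float:
    """Theoretical one-direction bandwidth in GB/s for PCIe 3.0 and later."""
    encoding_efficiency = 128 / 130  # 128b/130b line encoding
    bits_per_second = transfer_rate_gt * 1e9 * encoding_efficiency * lanes
    return bits_per_second / 8 / 1e9


gen3 = pcie_bandwidth_gb_per_s(8.0)   # PCIe 3.0 runs at 8 GT/s per lane
gen4 = pcie_bandwidth_gb_per_s(16.0)  # PCIe 4.0 runs at 16 GT/s per lane

print(f"PCIe 3.0 x16: {gen3:.2f} GB/s per direction")
print(f"PCIe 4.0 x16: {gen4:.2f} GB/s per direction")
```

Even at Gen 4.0 speeds the host link is far slower than the card's on-board memory, which is why keeping data resident on the GPU matters.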

Multi-Instance GPU (MIG) Technology: Efficiency Through Partitioning

The A100 introduces MIG (Multi-Instance GPU) functionality, allowing a single GPU to be partitioned into up to seven isolated GPU instances. Each instance behaves like a separate GPU with dedicated memory, cache, and compute resources. This gives considerable flexibility in multi-tenant environments, such as cloud platforms or shared research facilities, where resource isolation, QoS, and performance predictability are critical. With MIG, data centers can offer GPU compute power to multiple users simultaneously while keeping each user's performance predictable. For example, a single A100 can serve multiple Jupyter Notebook sessions running model inference or training jobs, thereby increasing GPU utilization while keeping energy and operational costs low. This aligns with the growing demand for AI democratization across organizations and institutions.
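The partitioning described above can be sketched as a simple capacity check in Python. The profile names follow the MIG naming used for the 80GB A100 (compute slices, then memory size), with seven compute slices and eight memory slices in total. The function `fits_on_gpu` is hypothetical, and it only checks slice totals; the real driver also enforces placement rules, so a combination that passes here is not guaranteed to be creatable.

```python
# Slice cost of each MIG profile on an 80GB A100: (compute slices, memory slices).
PROFILES = {
    "1g.10gb": (1, 1),
    "2g.20gb": (2, 2),
    "3g.40gb": (3, 4),
    "4g.40gb": (4, 4),
    "7g.80gb": (7, 8),
}
MAX_COMPUTE_SLICES = 7
MAX_MEMORY_SLICES = 8


def fits_on_gpu(requested: list[str]) -> bool:
    """Check that the requested instances stay within the GPU's slice totals.

    Simplification: placement constraints enforced by the driver are ignored.
    """
    compute = sum(PROFILES[name][0] for name in requested)
    memory = sum(PROFILES[name][1] for name in requested)
    return compute <= MAX_COMPUTE_SLICES and memory <= MAX_MEMORY_SLICES


print(fits_on_gpu(["1g.10gb"] * 7))          # seven small instances
print(fits_on_gpu(["4g.40gb", "3g.40gb"]))   # one large plus one medium
print(fits_on_gpu(["1g.10gb"] * 8))          # one instance too many
```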

Unrivaled AI and HPC Performance

Performance metrics of the A100 put it in a class of its own. The GPU delivers up to 19.5 TFLOPS of FP32, 156 TFLOPS of Tensor Float 32 (TF32) on its Tensor Cores (312 TFLOPS with structured sparsity), and 9.7 TFLOPS of FP64 (19.5 TFLOPS using the FP64 Tensor Cores). For inference, it reaches 624 TOPS of INT8, or about 1.2 peta-operations per second (1,248 TOPS) with sparsity. These specs make the A100 one of the most capable accelerators for deep learning training and real-time inference at scale. It excels in workloads such as speech recognition, autonomous driving algorithms, medical image diagnostics, and real-time language translation. In the realm of HPC, it speeds up molecular dynamics, quantum simulations, and fluid dynamics with precision and speed that earlier single-GPU setups could not reach. The A100, especially in its PCIe 80GB variant, is not just about raw power; it is about performance per dollar and per watt.

Ideal for Large-Scale AI Frameworks

The NVIDIA A100 is purpose-built to support popular AI frameworks such as TensorFlow, PyTorch, MXNet, and RAPIDS. Leveraging the NVIDIA CUDA and cuDNN platforms, developers can unlock the full potential of the A100 without changing their existing workflows. The large onboard memory and compute power let researchers train large models on a single card, scale toward far larger ones across multi-GPU clusters, run AI simulations in real time, and explore deep reinforcement learning environments at scale. Furthermore, the A100, in its SXM form, is a central component in NVIDIA DGX systems, which are used in some of the world’s most advanced AI research labs. It’s also a cornerstone of NVIDIA AI Enterprise, an end-to-end, cloud-native software suite optimized for VMware and Kubernetes, further expanding its deployment versatility across hybrid and multi-cloud architectures.

Enhanced Thermal Design and Dual-Slot Compatibility

The dual-slot design of the PCIe variant makes the A100 a good fit for high-performance rackmount systems, and for workstation towers with adequate directed airflow, without excessive custom infrastructure. The card uses a passive heat sink with no onboard fan and relies on the chassis to push air through its shroud, as is standard in server-grade environments. Given sufficient airflow, it’s designed to operate under heavy thermal loads without throttling, ensuring consistent performance even during 24/7 workloads. Power efficiency is equally strong: with a TDP of 300W, the card provides one of the best performance-per-watt ratios in the HPC market. Additionally, it supports system monitoring through the NVIDIA System Management Interface (nvidia-smi) and tools like DCGM (Data Center GPU Manager) to track thermals, utilization, and memory usage in real time. This allows system administrators to proactively manage performance and maintain high uptime for mission-critical environments.
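As an illustration of the monitoring described above, the following Python sketch reads temperature, utilization, and memory usage through nvidia-smi's CSV query mode. The helper names `parse_gpu_csv` and `query_gpus` and the sample output line are hypothetical. The sketch assumes every field is numeric; on some configurations (for example with MIG enabled) nvidia-smi can report `[N/A]` for a field, which this simple parser does not handle.

```python
import subprocess

# Fields requested from nvidia-smi, in the order they appear in each CSV row.
QUERY_FIELDS = ["temperature.gpu", "utilization.gpu", "memory.used", "memory.total"]


def parse_gpu_csv(text: str) -> list[dict]:
    """Turn nvidia-smi CSV output (no header, no units) into one dict per GPU."""
    rows = []
    for line in text.strip().splitlines():
        values = [float(v.strip()) for v in line.split(",")]
        rows.append(dict(zip(QUERY_FIELDS, values)))
    return rows


def query_gpus() -> list[dict]:
    """Run nvidia-smi and parse its output; requires the NVIDIA driver to be installed."""
    result = subprocess.run(
        ["nvidia-smi", "--query-gpu=" + ",".join(QUERY_FIELDS), "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,
    )
    return parse_gpu_csv(result.stdout)


# Hypothetical sample line: degrees C, percent utilization, MiB used, MiB total.
sample = "45, 87, 40960, 81920\n"
print(parse_gpu_csv(sample))
```

On a machine with the driver installed, calling `query_gpus()` returns the same structure with live values, one entry per GPU.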

Software Ecosystem and CUDA Compatibility

One of the reasons for the A100’s widespread adoption is its deep integration with the NVIDIA software stack, including CUDA 11+, cuBLAS, cuDNN, NCCL, and TensorRT. These libraries let developers take full advantage of the hardware capabilities without rewriting their codebases. For enterprise developers, the A100 also supports NVIDIA Triton Inference Server, which simplifies the deployment and scaling of AI inference models in production environments. With support for container runtimes such as Docker and orchestration through Kubernetes, the A100 is built for modern DevOps workflows. Its compatibility with virtualization tools also makes it well suited to multi-tenant AI model serving and virtualized compute environments for data science teams. The software ecosystem around the A100 ensures that it’s not only a hardware investment but a fully integrated AI and HPC solution.

The Ultimate Future-Proof GPU for Enterprise AI

In conclusion, the NVIDIA Ampere A100 80GB PCIe 4.0 Tensor Core GPU is a leading choice for computational acceleration in AI and scientific computing. It’s not just a graphics card; it’s a computational engine that drives discovery, learning, and innovation across every major industry. Whether it’s used in pharmaceutical labs to accelerate drug discovery, in autonomous vehicle R&D to power real-time perception models, or in financial institutions to forecast markets with predictive analytics, the A100 provides the scale, speed, and stability required for the most advanced applications. Its 80GB of memory, strong tensor compute capability, PCIe flexibility, and robust software support make it a long-lived solution for organizations preparing for the AI era. For those who are serious about deep learning, HPC, and big data, the A100 is not just an upgrade; it’s an investment in next-generation compute infrastructure.